Depth from a Single Image by Harmonizing Overcomplete Local Network Predictions

Ayan Chakrabarti, Jingyu Shao, Gregory Shakhnarovich

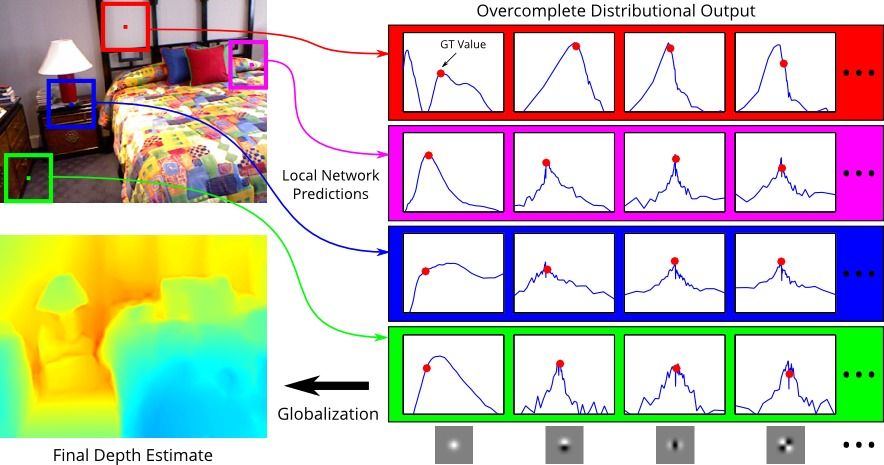

A single color image can contain many cues informative towards different aspects of local geometric structure. We approach the problem of monocular depth estimation by using a neural network to produce a mid-level representation that summarizes these cues. This network is trained to characterize local scene geometry by predicting, at every image location, depth derivatives of different orders, orientations and scales. However, instead of a single estimate for each derivative, the network outputs probability distributions that allow it to express confidence about some coefficients, and ambiguity about others. Scene depth is then estimated by harmonizing this overcomplete set of network predictions, using a globalization procedure that finds a single consistent depth map that best matches all the local derivative distributions. We demonstrate the efficacy of this approach through evaluation on the NYU v2 depth data set.
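The harmonization step described above can be illustrated with a toy sketch. This is not the authors' implementation: it works on a 1-D depth profile rather than an image, and it assumes each network output is a single Gaussian (a mean and a variance) over one derivative coefficient, in which case finding the depth map that best matches all predictions reduces to a weighted least-squares problem. The names `solve` and `harmonize` and the hand-written `predictions` list are hypothetical and exist only for this example.

```python
def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(M[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (M[r][n] - s) / M[r][r]
    return x


def harmonize(n, predictions):
    """Find the length-n depth profile z that best matches all predictions.

    Each prediction is (kernel, position, mean, variance) and states that
    sum_k kernel[k] * z[position + k] is distributed as N(mean, variance).
    Under this Gaussian assumption the best z minimizes the sum of
    (kernel . z - mean)^2 / variance, i.e. it solves the normal equations.
    """
    A = [[0.0] * n for _ in range(n)]
    b = [0.0] * n
    for kernel, pos, mean, var in predictions:
        w = 1.0 / var  # confident predictions get a large weight
        for i, ki in enumerate(kernel):
            b[pos + i] += w * mean * ki
            for j, kj in enumerate(kernel):
                A[pos + i][pos + j] += w * ki * kj
    return solve(A, b)


# Toy scene: a depth step, z = [1, 1, 1, 3, 3, 3].
predictions = [([1.0], 0, 1.0, 0.01)]  # zeroth order: absolute depth at one point
# First derivatives: confident where the surface is flat ...
for pos in (0, 1, 3, 4):
    predictions.append(([-1.0, 1.0], pos, 0.0, 0.01))
# ... but ambiguous (wrong mean, large variance) at the depth edge.
predictions.append(([-1.0, 1.0], 2, 0.0, 100.0))
# Second derivatives: confident everywhere, including around the edge.
for pos, mean in enumerate([0.0, 2.0, -2.0, 0.0]):
    predictions.append(([1.0, -2.0, 1.0], pos, mean, 0.01))

depth = harmonize(6, predictions)
print([round(d, 3) for d in depth])
```

In this toy setup the first-derivative estimate at the edge is wrong but carries a large variance, so the confident second-derivative predictions dominate and the recovered profile stays close to the true step. The set of predictions is overcomplete in the sense that there are more constraints (ten) than unknowns (six).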

| Publication | NIPS 2016 [arXiv] | |
| Downloads | Source Code | [GitHub] |
| | Trained Neural Model | [HDF5 859 MB] |
| | NYUv2 Test Set Results | [MAT 499 MB] |

This work was supported by the National Science Foundation under award no. IIS-1618021. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the authors, and do not necessarily reflect the views of the National Science Foundation.
